The Real AI Failure Mode: Data Quality at the Schema Layer, Not the Model

April 1, 2026

Everyone talks about AI risk at the model layer. Bias in training data. Hallucinations. Explainability. Model governance. Model monitoring.

Almost no one talks about the schema layer. Yet that's exactly where the AI risk teams cite most often actually lives.

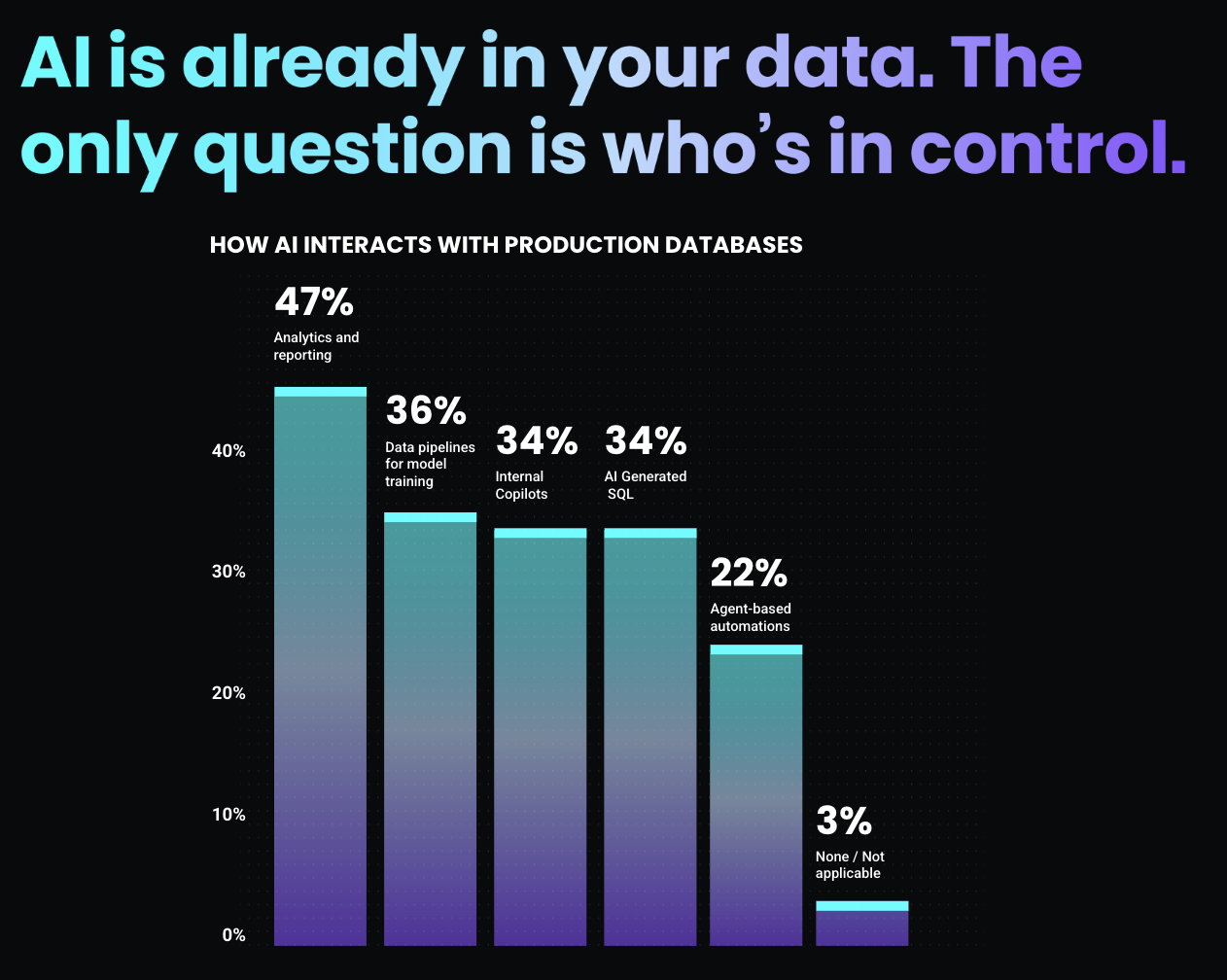

The 2026 State of Database Change Governance Report surveyed enterprise teams about AI-related risks around database change. The results were striking: respondents didn't worry most about algorithms. They worried about data.

Where AI Actually Fails

When asked about AI-related risks tied to database change, here's what organizations cited:

- 64% cited data quality issues as a top AI risk

- 39% pointed to schema drift disrupting pipelines

- 37% flagged regulatory non-compliance for AI workloads

- 34% worried about ungoverned AI-generated SQL

None of these are model problems. They're all problems at the schema and database layer.

Data quality issues. Ungoverned SQL. Schema drift. These are the exact failure modes that emerge when your database layer isn't governed. When changes happen without approval. When drift goes undetected. When lineage breaks.

Why The Schema Layer Matters More Than You Think

Your AI system is only as good as the data feeding it.

A model trained on clean, consistent, well-documented data produces reliable outputs. A model trained on drifted, inconsistent, undocumented data produces degraded outputs that look like model problems but are actually data problems.

Here's the scenario that plays out in production:

You train a model in January on data with specific characteristics: a certain table structure, specific column types, known relationships. The model learns the patterns in that data. It ships. It works.

Then in February, a schema change happens somewhere in your data pipeline. A column gets renamed. A constraint is dropped. A table gets reorganized. No one documents it. No one approves it through a formal process. It just happens.

Your model retrains in March on data with different characteristics. It learns new patterns based on the changed schema. It starts producing different outputs.

To you, it looks like model degradation. You spend weeks debugging the algorithm. You check your training process. You review feature engineering. You check data distributions.

What you don't check is the schema. Because you didn't know it changed.

That's the data quality risk 64% of respondents flagged, and it starts at the schema layer.
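One low-cost way to make "check the schema" part of the retraining routine is to record a fingerprint of the schema when a model is trained and compare it before every retrain. The sketch below assumes SQLite (Python's built-in `sqlite3` module) as a stand-in for your real database, and the helper names `schema_snapshot`, `fingerprint`, and `check_before_retrain` are hypothetical; this is not how Liquibase or any other specific tool implements it.

```python
import hashlib
import json
import sqlite3


def schema_snapshot(conn: sqlite3.Connection) -> dict:
    """Return {table: [[column, declared_type, not_null], ...]} for every user table."""
    tables = [
        name
        for (name,) in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
        ).fetchall()
    ]
    snapshot = {}
    for table in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        rows = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        snapshot[table] = [[row[1], row[2], bool(row[3])] for row in rows]
    return snapshot


def fingerprint(snapshot: dict) -> str:
    """Hash a canonical JSON rendering of the snapshot so any schema change alters the digest."""
    canonical = json.dumps(snapshot, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def check_before_retrain(conn: sqlite3.Connection, trained_fingerprint: str) -> None:
    """Refuse to retrain if the schema no longer matches the one the model was trained on."""
    current = fingerprint(schema_snapshot(conn))
    if current != trained_fingerprint:
        raise RuntimeError(
            "Schema changed since the model was trained; review the change before retraining."
        )


# January: train on this schema and store the fingerprint with the model artifact.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, signup_date TEXT NOT NULL, plan TEXT)"
)
trained_fingerprint = fingerprint(schema_snapshot(conn))

# February: an undocumented rename slips in (RENAME COLUMN needs SQLite 3.25 or later).
conn.execute("ALTER TABLE customers RENAME COLUMN plan TO plan_tier")

# March: the retraining job checks the schema first instead of training on it blindly.
try:
    check_before_retrain(conn, trained_fingerprint)
except RuntimeError as err:
    print(err)
```

Storing the fingerprint next to the model artifact makes the schema an explicit input to the model, so a mismatch surfaces as a schema question before anyone starts debugging the algorithm.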

Ungoverned AI-Generated SQL Makes It Worse

34% of organizations worry specifically about ungoverned AI-generated SQL. And they should.

AI-generated SQL is powerful. It lets teams ship faster. Models can generate the SQL they need instead of waiting for DBAs. Agents can execute queries dynamically. Copilots can draft changes.

But ungoverned AI-generated SQL is a liability. An AI system can propose changes that violate data quality rules. It can generate SQL that creates downstream inconsistencies. It can execute changes that break lineage or violate compliance requirements.

When that SQL runs without governance, without approval, without evidence, you have no audit trail. You have no way to explain why a change happened. You have no way to prove it was authorized.
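As a minimal sketch of what "governed" could mean for AI-generated SQL, the gate below applies a default-deny rule: statements that begin with `SELECT` run without review, anything else needs a named human approver who is not the author, and every submission, allowed or not, leaves an audit entry with a hash of the exact SQL. The `ChangeGate` class, the keyword rule, and the audit fields are illustrative assumptions. Splitting on semicolons and reading the first keyword is deliberately crude and is no substitute for a real SQL parser or a governed change pipeline.

```python
import hashlib
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

# Default-deny: only statements whose first keyword is listed here skip human review.
READ_ONLY_KEYWORDS = {"SELECT"}


@dataclass
class AuditEntry:
    sql_sha256: str          # hash of the exact SQL text that was submitted
    author: str              # e.g. the AI agent or copilot that generated it
    approver: Optional[str]  # human approver, if any
    decision: str
    timestamp: str           # UTC, ISO 8601


def leading_keyword(statement: str) -> str:
    """Return the first SQL keyword, skipping whitespace and leading '--' comment lines."""
    stripped = re.sub(r"^(\s*--[^\n]*\n)*\s*", "", statement)
    match = re.match(r"[A-Za-z]+", stripped)
    return match.group(0).upper() if match else ""


def requires_approval(sql: str) -> bool:
    """Crude on purpose: errs toward review, but is not a parser (SELECT ... INTO would slip by)."""
    statements = [s for s in sql.split(";") if s.strip()]
    return any(leading_keyword(s) not in READ_ONLY_KEYWORDS for s in statements)


@dataclass
class ChangeGate:
    audit_log: List[AuditEntry] = field(default_factory=list)

    def submit(self, sql: str, author: str, approver: Optional[str] = None) -> bool:
        """Decide whether the SQL may run, and record the decision either way."""
        needs_review = requires_approval(sql)
        # Separation of duties: the author cannot approve its own change.
        approved = approver is not None and approver != author
        allowed = not needs_review or approved
        if not needs_review:
            decision = "auto-approved: read-only"
        elif approved:
            decision = "approved"
        else:
            decision = "blocked: approval required"
        self.audit_log.append(
            AuditEntry(
                sql_sha256=hashlib.sha256(sql.encode("utf-8")).hexdigest(),
                author=author,
                approver=approver,
                decision=decision,
                timestamp=datetime.now(timezone.utc).isoformat(),
            )
        )
        return allowed


gate = ChangeGate()
print(gate.submit("SELECT id, plan FROM customers", author="ai-agent"))  # True
print(gate.submit("ALTER TABLE customers DROP COLUMN plan", author="ai-agent"))  # False
print(gate.submit("ALTER TABLE customers DROP COLUMN plan",
                  author="ai-agent", approver="dba.alice"))  # True
```

The names `ai-agent` and `dba.alice` are placeholders. The point of the design is that the audit log answers the questions above (what ran, who proposed it, who authorized it, and when) even for changes that were blocked.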

The report found that the organizations most concerned about ungoverned AI-generated SQL are also the ones with the lowest governance maturity. Ad hoc controls. Manual approvals. Inconsistent enforcement.

They're right to be concerned.

Schema Drift Is Silent

39% cite schema drift disrupting pipelines as a top AI risk. And drift is the most insidious problem because it happens quietly.

A column gets dropped on production but not staging. A constraint changes on one platform but not another. A table gets renamed without updating all downstream dependencies. Someone runs a migration script on the data warehouse that doesn't get documented.

For traditional applications, drift creates reliability issues. For AI systems, it's worse. Drift changes the characteristics of the data your model depends on. Your model doesn't know anything has changed. It trains on drifted data, learns patterns that don't match the schema your application expects, and ships outputs based on assumptions that are no longer true.

Drift detection requires continuous visibility into what your schemas actually look like across all your environments. Not periodic checks. Not manual reviews. Continuous, automated detection.
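At its core, automated drift detection is a comparison of the actual schemas in each environment. The sketch below assumes two in-memory SQLite databases standing in for staging and production and compares column names and declared types table by table; the function names are hypothetical. Making it continuous would mean scheduling it (for example, in CI or a cron job) against every environment, which is outside this sketch.

```python
import sqlite3


def columns_by_table(conn: sqlite3.Connection) -> dict:
    """Map each user table to {column_name: declared_type}."""
    result = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' AND name NOT LIKE 'sqlite_%'"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        rows = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        result[table] = {row[1]: row[2] for row in rows}
    return result


def diff_schemas(reference: dict, target: dict) -> list:
    """List human-readable differences of the target schema relative to the reference."""
    findings = []
    for table in sorted(reference.keys() | target.keys()):
        if table not in target:
            findings.append(f"table {table}: missing in target")
            continue
        if table not in reference:
            findings.append(f"table {table}: only in target")
            continue
        ref_cols, tgt_cols = reference[table], target[table]
        for col in sorted(ref_cols.keys() - tgt_cols.keys()):
            findings.append(f"{table}.{col}: dropped in target")
        for col in sorted(tgt_cols.keys() - ref_cols.keys()):
            findings.append(f"{table}.{col}: added in target")
        for col in sorted(ref_cols.keys() & tgt_cols.keys()):
            if ref_cols[col] != tgt_cols[col]:
                findings.append(f"{table}.{col}: type {ref_cols[col]} -> {tgt_cols[col]}")
    return findings


staging = sqlite3.connect(":memory:")
staging.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, region TEXT)")

# Production has drifted: region was dropped and amount's type was changed by hand.
production = sqlite3.connect(":memory:")
production.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount NUMERIC)")

for finding in diff_schemas(columns_by_table(staging), columns_by_table(production)):
    print(finding)
# orders.region: dropped in target
# orders.amount: type REAL -> NUMERIC
```

This column-level diff is intentionally narrow; a fuller check would also compare constraints, indexes, and views, since drift such as a changed constraint would not show up here.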

Most organizations don't have that. Only 48% automate drift detection always or often. The remaining 52% automate it less often, which means much of their drift is caught after the fact: after the damage is done, after the model has been trained on bad data.

The Regulatory Pressure Is Coming

37% flag regulatory non-compliance for AI workloads as a top risk. That number is going to grow.

Regulators are starting to ask about AI governance. How are you validating that AI-generated changes are safe? How are you ensuring that your models are trained on compliant data? How are you documenting the schemas your AI systems depend on?

Rules and guidance are already arriving. The EU AI Act requires providers of high-risk AI systems to document the data used to train them. In the US, the SEC is scrutinizing how financial firms use AI, and HIPAA obligations apply whenever AI systems in healthcare process protected health information.

When auditors ask, "How do you ensure your AI uses compliant data?" the answer isn't "We have model governance." The answer is "Our database layer is governed so that data quality and compliance are enforced at the schema layer before AI ever touches it."

Organizations without schema-level governance can't answer that question credibly.

Here's What Most Organizations Miss

They build AI governance on top of ungoverned databases. They assume the database layer is stable. They focus governance and monitoring on the AI layer: model validation, output monitoring, and bias detection.

But the AI risk organizations cite most often, data quality, starts in the database. If your governance stack doesn't include the schema layer, you're monitoring the wrong layer.

How Liquibase Secure Protects Your AI Foundation

AI is only as good as the data feeding it. Liquibase Secure protects that foundation through five core capabilities:

The result: your AI teams can ship faster because they're not blocked by governance friction. Your compliance teams can prove governance because evidence is generated automatically. Your business can trust AI outputs because the data feeding them is governed and auditable.

The Question You Need to Answer

If the AI risk organizations cite most often lives at the schema layer, where does your AI governance sit today?

If it's sitting at the model layer while your database layer runs on ad hoc governance, you're monitoring the symptom while the disease spreads.

AI is only as good as the data. Secure the data layer first.
